The main functionalities provided by afex are:

aov_car(), aov_ez(), and

aov_4() which all fit the same model but differ in the way

to specify the ANOVA model).mixed() provides an interface for mixed models

analysis (estimated via lme4 lmer or

glmer) that automatically obtains p-values for

fixed effects model terms (i.e., main effects and interactions).afex_plot() visualizes results from factorial

experiments combining estimated marginal means and uncertainties

associated with the estimated means in the foreground with a depiction

of the raw data in the background.afex model objects (i.e., ANOVA and mixed models)

can be passed to emmeans for follow-up/post-hoc/planned

contrast analysis.For afex support visit: afex.singmann.science

afex is available from CRAN so the current stable

version can be installed directly via:

install.packages("afex")

To install the latest development version you will need the devtools

package:

devtools::install_github("singmann/afex@master")

To calculate an ANOVA, afex requires the data to be in

the long format (i.e., one row per data point/observation). An ANOVA can

then be calculated via one of three functions that only differ in how

the model components are specified, but not in the output. Note that in

contrast to base lm or aov, afex

ANOVA functions always require the specification of a subject identifier

column (the id-column), because in case there are multiple observations

per participant and cell of the design, these multiple observations are

aggregated (i.e., averaged) per default.

aov_ez the columns containing id variable, dependent

variable, and factors need to be specified as character vectors.aov_car behaves similar to standard aov

and requires the ANOVA to be specified as a formula containing an

Error term (at least to identify the id variable).aov_4 allows the ANOVA to be specified via a formula

similar to lme4::lmer (with one random effects term).A further overview is provided by the vignette.

The following code provides a simple example for an ANOVA with both

between- and within-subject factors. For this we use the

lexical-decision and word naming latencies reported by Freeman,

Heathcote, Chalmers, and Hockley (2010), see also

?fhch2010. As is commonly done, we use the natural

logarithm of the response times, log_rt, as dependent

variable. As independent variable we will consider the between-subjects

factor task ("naming" or

"lexdec") as well as the within-subjects-factors

stimulus ("word" or "nonword")

and length (with 3 levels, 3, 4, or 5 letters).

library("afex")

# examples data set with both within- and between-subjects factors (see ?fhch2010)

data("fhch2010", package = "afex")

fhch <- fhch2010[ fhch2010$correct,] # remove errors

str(fhch2010) # structure of the data

#> 'data.frame': 13222 obs. of 10 variables:

#> $ id : Factor w/ 45 levels "N1","N12","N13",..: 1 1 1 1 1 1 1 1 1 1 ...

#> $ task : Factor w/ 2 levels "naming","lexdec": 1 1 1 1 1 1 1 1 1 1 ...

#> $ stimulus : Factor w/ 2 levels "word","nonword": 1 1 1 2 2 1 2 2 1 2 ...

#> $ density : Factor w/ 2 levels "low","high": 2 1 1 2 1 2 1 1 1 1 ...

#> $ frequency: Factor w/ 2 levels "low","high": 1 2 2 2 2 2 1 2 1 2 ...

#> $ length : Factor w/ 3 levels "4","5","6": 3 3 2 2 1 1 3 2 1 3 ...

#> $ item : Factor w/ 600 levels "abide","acts",..: 363 121 202 525 580 135 42 368 227 141 ...

#> $ rt : num 1.091 0.876 0.71 1.21 0.843 ...

#> $ log_rt : num 0.0871 -0.1324 -0.3425 0.1906 -0.1708 ...

#> $ correct : logi TRUE TRUE TRUE TRUE TRUE TRUE ...# estimate mixed ANOVA on the full design:

aov_ez("id", "log_rt", fhch, between = "task", within = c("stimulus", "length"))

aov_car(log_rt ~ task * stimulus * length + Error(id/(stimulus * length)),

data = fhch)

## equivalent: aov_car(log_rt ~ task + Error(id/(stimulus * length)), data = fhch)

aov_4(log_rt ~ task * stimulus * length + (stimulus * length|id), data = fhch)

## equivalent: aov_4(log_rt ~ task + (stimulus * length|id), data = fhch)

# the three calls return the same ANOVA table:#> Warning: More than one observation per design cell, aggregating data using `fun_aggregate = mean`.

#> To turn off this warning, pass `fun_aggregate = mean` explicitly.

#> Contrasts set to contr.sum for the following variables: task

#> Anova Table (Type 3 tests)

#>

#> Response: log_rt

#> Effect df MSE F ges p.value

#> 1 task 1, 43 0.23 13.38 *** .221 <.001

#> 2 stimulus 1, 43 0.01 173.25 *** .173 <.001

#> 3 task:stimulus 1, 43 0.01 87.56 *** .096 <.001

#> 4 length 1.83, 78.64 0.00 18.55 *** .008 <.001

#> 5 task:length 1.83, 78.64 0.00 1.02 <.001 .358

#> 6 stimulus:length 1.70, 72.97 0.00 1.91 <.001 .162

#> 7 task:stimulus:length 1.70, 72.97 0.00 1.21 <.001 .298

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1

#>

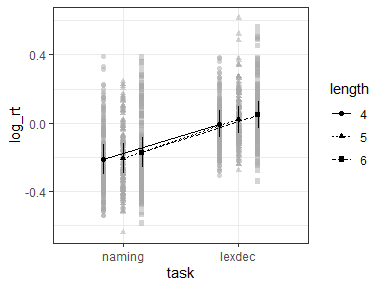

#> Sphericity correction method: GGafex_plotANOVA models can be used for plotting via afex_plot:

a <- aov_ez("id", "log_rt", fhch, between = "task", within = c("stimulus", "length"))

#> Warning: More than one observation per design cell, aggregating data using `fun_aggregate = mean`.

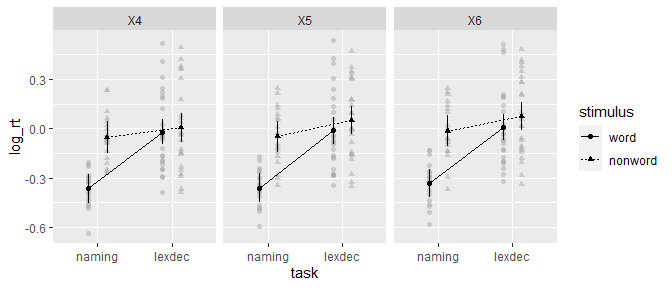

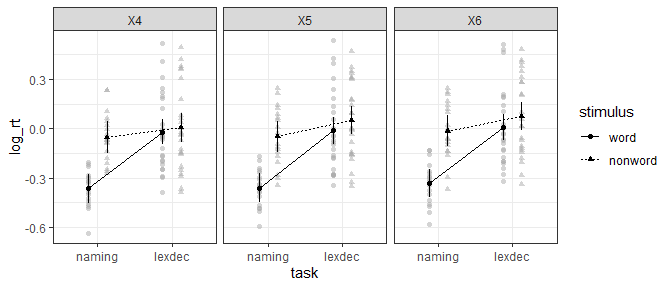

#> To turn off this warning, pass `fun_aggregate = mean` explicitly.afex_plot(a, "task", "stimulus", "length")

#> Warning: Panel(s) show a mixed within-between-design.

#> Error bars do not allow comparisons across all means.

#> Suppress error bars with: error = "none"

afex_plot returns a ggplot2 plot object

which allows simple customization:

library("ggplot2")

afex_plot(a, "task", "stimulus", "length") +

theme_bw()

#> Warning: Panel(s) show a mixed within-between-design.

#> Error bars do not allow comparisons across all means.

#> Suppress error bars with: error = "none"

emmeansFollow-up tests with emmeans need to be specified in two

steps.

emmeans().list and using contrast() or a

convenience function such as pairs().suppressPackageStartupMessages(library("emmeans"))

## set up reference grid using only length

em1 <- emmeans(a, "length")

em1

#> length emmean SE df lower.CL upper.CL

#> X4 -0.1087 0.0299 43 -0.169 -0.04834

#> X5 -0.0929 0.0296 43 -0.153 -0.03310

#> X6 -0.0653 0.0290 43 -0.124 -0.00679

#>

#> Results are averaged over the levels of: task, stimulus

#> Confidence level used: 0.95

## test all pairwise comparisons on reference grid:

pairs(em1)

#> contrast estimate SE df t.ratio p.value

#> X4 - X5 -0.0159 0.00768 43 -2.065 0.1092

#> X4 - X6 -0.0434 0.00782 43 -5.555 <.0001

#> X5 - X6 -0.0276 0.00602 43 -4.583 0.0001

#>

#> Results are averaged over the levels of: task, stimulus

#> P value adjustment: tukey method for comparing a family of 3 estimates

## only test specified tests

con <- list(

"4vs5" = c(-1, 1, 0),

"5vs6" = c(0, -1, 1)

)

contrast(em1, con, adjust = "holm")

#> contrast estimate SE df t.ratio p.value

#> 4vs5 0.0159 0.00768 43 2.065 0.0449

#> 5vs6 0.0276 0.00602 43 4.583 0.0001

#>

#> Results are averaged over the levels of: task, stimulus

#> P value adjustment: holm method for 2 testsFunction mixed() fits a mixed model with

lme4::lmer (or lme4::glmer if a

family argument is passed) and then calculates

p-values for fixed effects model terms using a variety of

methods. The formula to mixed needs to be the same as in a

call to lme4::lmer. The default method for calculation of

p-values is 'S' (Satterthwaite) which only works

for linear mixed models (i.e., no family argument). A

similar method that provides a somewhat better control of Type I errors

for small data sets is 'KR' (Kenward-Roger), but it can

require considerable RAM and time. Other methods are , similar to

'KR' but requires less RAM), 'PB' (parametric

bootstrap), and 'LRT' (likelihood-ratio test).

More examples are provided in the vignette,

here we use the same example data as above, the lexical decision and

word naming latencies collected by Freeman et al. (2010). To avoid long

computation times we only consider the two factors task and

length (omitting stimulus is probably not a

fully sensible model). Because mixed models easily allow it, we will

consider crossed-random effects for participants (id) and

items (tem).

library("afex")

# examples data set with both within- and between-subjects factors (see ?fhch2010)

data("fhch2010", package = "afex")

fhch <- fhch2010[ fhch2010$correct,] # remove errors

str(fhch2010) # structure of the data

#> 'data.frame': 13222 obs. of 10 variables:

#> $ id : Factor w/ 45 levels "N1","N12","N13",..: 1 1 1 1 1 1 1 1 1 1 ...

#> $ task : Factor w/ 2 levels "naming","lexdec": 1 1 1 1 1 1 1 1 1 1 ...

#> $ stimulus : Factor w/ 2 levels "word","nonword": 1 1 1 2 2 1 2 2 1 2 ...

#> $ density : Factor w/ 2 levels "low","high": 2 1 1 2 1 2 1 1 1 1 ...

#> $ frequency: Factor w/ 2 levels "low","high": 1 2 2 2 2 2 1 2 1 2 ...

#> $ length : Factor w/ 3 levels "4","5","6": 3 3 2 2 1 1 3 2 1 3 ...

#> $ item : Factor w/ 600 levels "abide","acts",..: 363 121 202 525 580 135 42 368 227 141 ...

#> $ rt : num 1.091 0.876 0.71 1.21 0.843 ...

#> $ log_rt : num 0.0871 -0.1324 -0.3425 0.1906 -0.1708 ...

#> $ correct : logi TRUE TRUE TRUE TRUE TRUE TRUE ...For the random-effects grouping factors we begin with the maximal

random effect structure justified by the design (see Barr, Levy,

Scheepers, & Tily, 2013). In this case this is by-subject random

intercepts and by-subjects random slopes for stimulus and

by-item random intercepts and by-item random slopes for

task.

m1 <- mixed(log_rt ~ task * length + (length | id) + (task | item),

fhch)

#> Contrasts set to contr.sum for the following variables: task, length, id, item

#> boundary (singular) fit: see help('isSingular')Fitting this model produces a critical convergence warning, that the fit is singular. This warning usually indicates that the data does not provide enough information for the request random effect parameters. In a real analysis it would therefore be a good idea to iteratively reduce the random effect structure until the warning disappears. A good first step would be to remove the correlations among random effect terms as shown below.

This warning is also shown if we simply print the model object, but

not if we call the nice() method.

m1

#> Warning: lme4 reported (at least) the following warnings for 'full':

#> * boundary (singular) fit: see help('isSingular')

#> Mixed Model Anova Table (Type 3 tests, S-method)

#>

#> Model: log_rt ~ task * length + (length | id) + (task | item)

#> Data: fhch

#> Effect df F p.value

#> 1 task 1, 44.79 13.47 *** <.001

#> 2 length 2, 325.77 6.03 ** .003

#> 3 task:length 2, 303.20 0.33 .722

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1

nice(m1)

#> Mixed Model Anova Table (Type 3 tests, S-method)

#>

#> Model: log_rt ~ task * length + (length | id) + (task | item)

#> Data: fhch

#> Effect df F p.value

#> 1 task 1, 44.79 13.47 *** <.001

#> 2 length 2, 325.77 6.03 ** .003

#> 3 task:length 2, 303.20 0.33 .722

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1If we call the anova() method a slightly different

output is shown in which the p-values are not rounded in the same way

and the warning is shown again.

anova(m1)

#> Warning: lme4 reported (at least) the following warnings for 'full':

#> * boundary (singular) fit: see help('isSingular')

#> Mixed Model Anova Table (Type 3 tests, S-method)

#>

#> Model: log_rt ~ task * length + (length | id) + (task | item)

#> Data: fhch

#> num Df den Df F Pr(>F)

#> task 1 44.787 13.4672 0.0006432 ***

#> length 2 325.766 6.0252 0.0026947 **

#> task:length 2 303.203 0.3263 0.7218141

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1We can also get the default lme4 output if we call the

summary method. However, note that in contrast to the

previous methods, results are shown for factor-levels and not

model-terms which is usually not interpretable for factors with more

than two levels. This is the case for length here. The

problem is that factors with \(k\)

levels are mapped to \(k-1\) parameters

and at the same time the intercept represent the (unweighted) grand

mean. This means that factor-levels cannot be mapped in a 1-to-1 manner

to the parameters and thus cannot be uniquely interpreted.

summary(m1)

#> Linear mixed model fit by REML. t-tests use Satterthwaite's method [

#> lmerModLmerTest]

#> Formula: log_rt ~ task * length + (length | id) + (task | item)

#> Data: data

#>

#> REML criterion at convergence: 7624.2

#>

#> Scaled residuals:

#> Min 1Q Median 3Q Max

#> -5.9268 -0.5900 -0.1018 0.4789 5.2673

#>

#> Random effects:

#> Groups Name Variance Std.Dev. Corr

#> item (Intercept) 0.0115706 0.10757

#> task1 0.0104571 0.10226 0.47

#> id (Intercept) 0.0374107 0.19342

#> length1 0.0003297 0.01816 0.16

#> length2 0.0001009 0.01005 0.11 -0.96

#> Residual 0.0925505 0.30422

#> Number of obs: 12960, groups: item, 600; id, 45

#>

#> Fixed effects:

#> Estimate Std. Error df t value Pr(>|t|)

#> (Intercept) -0.089098 0.029471 44.978712 -3.023 0.004120 **

#> task1 -0.108035 0.029439 44.786596 -3.670 0.000643 ***

#> length1 -0.020756 0.007810 226.899942 -2.658 0.008425 **

#> length2 -0.003746 0.007467 380.165727 -0.502 0.616216

#> task1:length1 0.005719 0.007568 206.605639 0.756 0.450707

#> task1:length2 -0.004628 0.007214 353.152953 -0.641 0.521632

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Correlation of Fixed Effects:

#> (Intr) task1 lngth1 lngth2 tsk1:1

#> task1 0.118

#> length1 0.056 0.007

#> length2 0.021 0.002 -0.526

#> tsk1:lngth1 0.007 0.058 0.329 -0.173

#> tsk1:lngth2 0.003 0.022 -0.174 0.349 -0.528

#> optimizer (nloptwrap) convergence code: 0 (OK)

#> boundary (singular) fit: see help('isSingular')Because of the singular fit warning, we reduce the random effect

structure. Usually a good starting point is removing the correlations

among the random effects parameters. This can be done in

afex::mixed even for factors by combining the double bar

notation || with expand_re = TRUE. We do so

for both random effects terms.

m2 <- mixed(log_rt ~ task * length + (length || id) + (task || item),

fhch, expand_re = TRUE)

#> Contrasts set to contr.sum for the following variables: task, length, id, item

#> boundary (singular) fit: see help('isSingular')However, the singular fit warning remains. We therefore inspect the random effect estimates to see which random effect parameter is estimated to be near to zero.

summary(m2)$varcor

#> Groups Name Std.Dev.

#> item re2.task1 0.101196

#> item.1 (Intercept) 0.106845

#> id re1.length2 0.000000

#> id.1 re1.length1 0.012291

#> id.2 (Intercept) 0.193395

#> Residual 0.304374As shown above, one parameter of the by-participant random slope for

length is estimated to be almost zero,

re1.length2. We therefore remove the by-participant random

slope for length in the next model which does not show any

convergence warnings.

m3 <- mixed(log_rt ~ task * length + (1 | id) + (task || item),

fhch, expand_re = TRUE)

#> Contrasts set to contr.sum for the following variables: task, length, id, item

m3

#> Mixed Model Anova Table (Type 3 tests, S-method)

#>

#> Model: log_rt ~ task * length + (1 | id) + (task || item)

#> Data: fhch

#> Effect df F p.value

#> 1 task 1, 44.74 13.52 *** <.001

#> 2 length 2, 597.20 6.67 ** .001

#> 3 task:length 2, 592.82 0.40 .668

#> ---

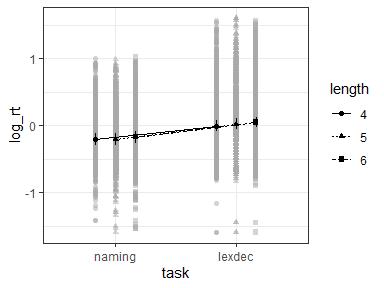

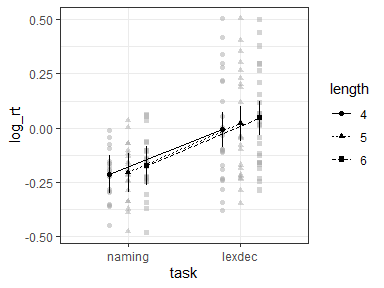

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1afex_plotObjects returned by mixed can be used for plotting with

afex_plot. However, two things need to be considered.

id argument of afex_plot allows

specifying over which random effects grouping factors the data plotted

in the background should be averaged over. Per default this uses all

random effects grouping factors. In the present case this would mean

that all data points are shown resulting in a very busy plot. When

choosing only one of the random effects grouping factor, data points in

the background show average response for each level of that factor. For

example, when setting id = "id" here each data point in

the background shows the mean log_rt of one participant

(i.e., level of id).emmeans which per default attempts to estimate the degrees

of freedom using the expensive Kenward-Roger method unless the number of

data points is high (as here). This can produce quite some status

messages (not shown here). Use

emmeans::emm_options(lmer.df = "asymptotic") to suppress

this calculation.library("ggplot2")

## all data points shown

afex_plot(m3, "task", "length") +

theme_bw()

## data points show IDs

afex_plot(m3, "task", "length", id = "id") +

theme_bw()

## data points show items

afex_plot(m3, "task", "length", id = "item") +

theme_bw()

emmeansFollow-up tests with emmeans need to be specified in two

steps.

emmeans().list and using contrast() or a

convenience function such as pairs().For mixed models, emmeans attempts to estimate the

degrees of freedom. The method can be set via

emm_options(lmer.df = ...). Here we use

"asymptotic" which does not estimate the degrees of

freedom, but sets them to infinity.

library("emmeans")

emm_options(lmer.df = "asymptotic")

## set up reference grid using only length

em2 <- emmeans(m3, "length")

#> NOTE: Results may be misleading due to involvement in interactions

em2

#> length emmean SE df asymp.LCL asymp.UCL

#> 4 -0.1099 0.0304 Inf -0.169 -0.05040

#> 5 -0.0924 0.0304 Inf -0.152 -0.03296

#> 6 -0.0642 0.0304 Inf -0.124 -0.00469

#>

#> Results are averaged over the levels of: task

#> Degrees-of-freedom method: asymptotic

#> Confidence level used: 0.95

## test all pairwise comparisons on reference grid:

pairs(em2)

#> contrast estimate SE df z.ratio p.value

#> length4 - length5 -0.0175 0.0126 Inf -1.384 0.3495

#> length4 - length6 -0.0457 0.0126 Inf -3.618 0.0009

#> length5 - length6 -0.0282 0.0126 Inf -2.238 0.0649

#>

#> Results are averaged over the levels of: task

#> Degrees-of-freedom method: asymptotic

#> P value adjustment: tukey method for comparing a family of 3 estimates

## only test specified tests

con <- list(

"4vs5" = c(-1, 1, 0),

"5vs6" = c(0, -1, 1)

)

contrast(em2, con, adjust = "holm")

#> contrast estimate SE df z.ratio p.value

#> 4vs5 0.0175 0.0126 Inf 1.384 0.1665

#> 5vs6 0.0282 0.0126 Inf 2.238 0.0504

#>

#> Results are averaged over the levels of: task

#> Degrees-of-freedom method: asymptotic

#> P value adjustment: holm method for 2 testsBarr, D. J., Levy, R., Scheepers, C., & Tily, H. J. (2013). Random effects structure for confirmatory hypothesis testing: Keep it maximal. Journal of Memory and Language, 68(3), 255-278. https://doi.org/10.1016/j.jml.2012.11.001

Freeman, E., Heathcote, A., Chalmers, K., & Hockley, W. (2010). Item effects in recognition memory for words. Journal of Memory and Language, 62(1), 1-18. https://doi.org/10.1016/j.jml.2009.09.004

Please note that afex is released with a Contributor

Code of Conduct. By contributing to this project, you agree to abide

by its terms.